Physical Address

London, UK

Physical Address

London, UK

The Myth of the “Activity” Dashboard: Why Your Test Metrics Are Answering the Wrong Question

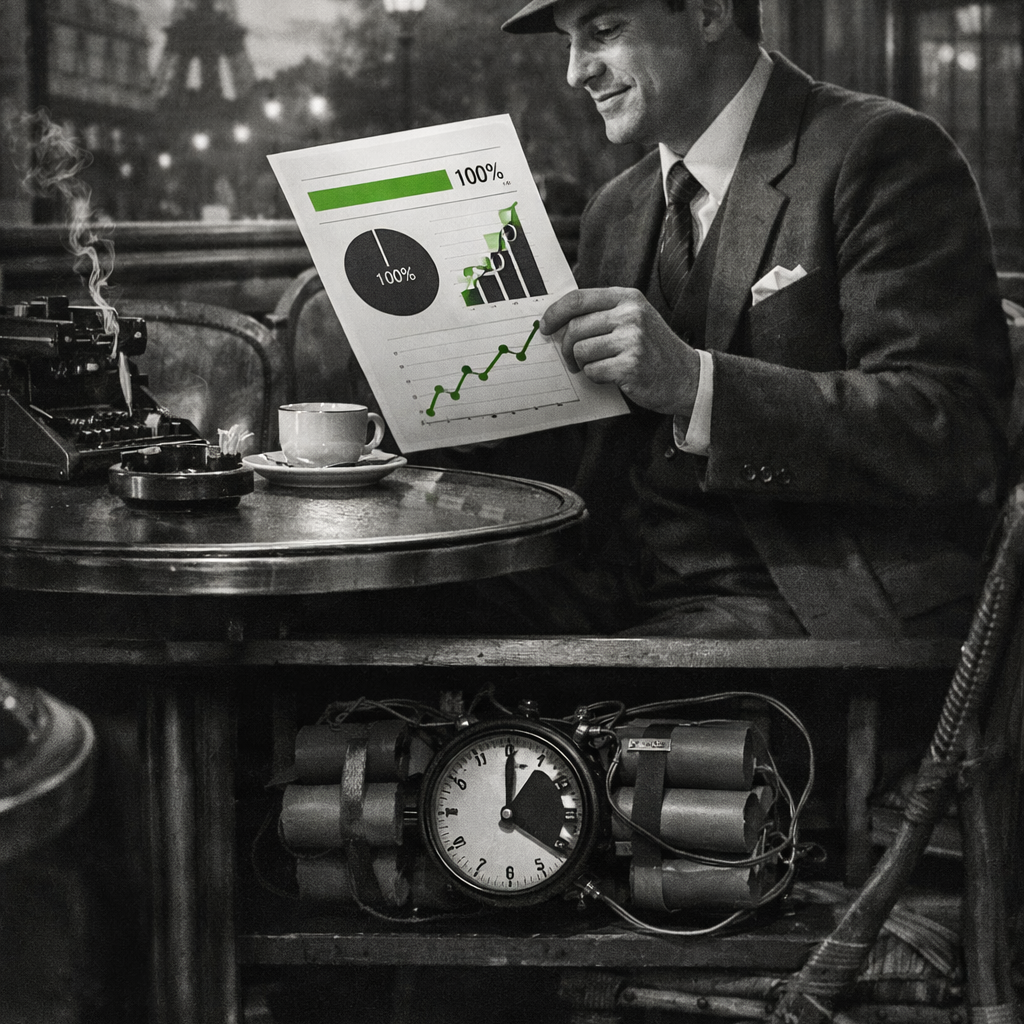

Your testing dashboard glows green. 95% code coverage. 98% test pass rate. Requirements traceability: complete. Leadership breathes easier. The business carries on. Launch day arrives.

Customer complaints spike. Conversion craters. Market share bleeds. Brand reputation: damaged. Competitive advantage: lost. CFO demands answers. Board wants explanations. The post-mortem begins.

Here’s the uncomfortable truth: those green lights weren’t measuring quality. They were measuring activity. And confusing the two just cost you millions—whilst your dashboard kept glowing green right up until the moment it mattered.

The Comforting Illusion of Certainty

Unfortunately, history is littered with companies that were measuring the wrong thing, and it came back to haunt them in a very real and painful way.

Let’s take the example of TSB. In April 2018, the team behind TSB’s core banking migration were posting champagne photos on LinkedIn, calling themselves “champions.” They’d just completed the migration of 5.4 million customer accounts from Lloyds’ legacy platform to a new system called Proteo4UK. The data migration was complete. The technical checkpoint had passed. By every project metric they were tracking, the job was done & the dashboard flashed Green.

Hours later, the platform crumbled. Up to 1.9 million customers were locked out of their accounts. Money disappeared from balances. Some customers could see other people’s account details. The cost? Over £330 million in compensation, fraud losses, emergency remediation, and foregone income. The CEO lost his job. The regulator eventually fined the bank £48.65 million. TSB swung from a £162.7 million profit to a £105.4 million loss in a single year.

When investigated, it emerged that due to a fixed launch timetable compromises had been made. The testing had never taken place in a live environment. There was too much quality debt being “OK’d” (over 2,000 system defects had been uncovered during the project—but only 800 dealt with) to keep the project on track and reporting Green. The team had been measuring project completion. Nobody was measuring risk.

The project dashboards hadn’t lied, exactly. They’d told TSB precisely what they’d been asked to measure: milestones completed, data migrated, code deployed. But they hadn’t told leadership what they needed to know—whether the system would actually work when millions of customers needed it.

When Measurement Becomes Performance Theatre

Software projects in large enterprise organisations are rarely simple. They are complex, interdependent systems involving multiple teams, technologies, suppliers, and stakeholders. The number of unknowns makes it impossible to meaningfully reduce quality to a small set of linear, binary metrics.

The problem isn’t just that teams measure the wrong things—though they often do. The deeper issue is treating testing like manufacturing, where activity equals progress and volume implies value. In that mental model, more tests, more automation, and higher coverage must mean higher quality.

But testing doesn’t work that way.

In manufacturing, more activity means more output. In testing, more activity might mean less insight.

A line of code executed is not a risk explored. A test passed is not a scenario validated. A green dashboard is not confidence to release.

Code coverage is a perfect example. It tells you which parts of the code were executed during testing—but execution alone doesn’t imply correctness. You can achieve 100% line coverage whilst completely missing critical decision paths, untested exception flows, concurrency issues, or edge cases. In practice, the most damaging defects tend to hide precisely in these areas: branching logic, error handling, and rarely exercised conditions.

Despite this, organisations continue to set arbitrary coverage targets—70%, 80%, 95%—as if quality were a percentage that could be calculated rather than a risk that must be understood.

Let’s Be Clear About Traditional Metrics

Before going further, let me acknowledge something important: traditional test metrics aren’t useless.

Test execution counts, defect totals, coverage percentages—they all serve legitimate purposes. They help justify departmental budgets. They demonstrate capacity and activity to stakeholders. They meet expectations shaped by decades of industry practice. For a test manager reporting to finance, “We executed 1,000 tests, found 100 defects, achieved 85% coverage for £100,000” provides tangible evidence of value and return on investment.

The problem isn’t that these metrics exist. The problem is what happens when organisations stop there—when they mistake activity measurement for risk intelligence, when they confuse counting stones turned over with understanding the landscape beneath.

The Dashboard Hierarchy: What to Measure, and Why

The solution isn’t abandoning traditional metrics. It’s understanding what each layer tells you—and what it doesn’t.

Tier 1: Strategic Risk Intelligence (for leadership decision-making)

Tier 2: Operational Activity (for budget justification and capacity planning)

Tier 3: Technical Hygiene (for team improvement)

Traditional “vanity metrics” sit in Tier 2. They’re necessary. They’re defensible. They help CFOs understand what their investment buys. The finance director needs to see 1,000 tests executed to justify your department’s budget.

The danger is when organisations only report Tier 2 to leadership who desperately need Tier 1. When your dashboard says “95% tests passed” but can’t answer “what business risk did those tests actually mitigate?”—that’s when measurement becomes theatre.

Here’s a useful thought experiment: imagine your testing dashboard disappeared tomorrow. What information would leadership actually need to decide whether to ship? That’s what you should be reporting.

The Real Cost of Incomplete Measurement

This is where incomplete measurement becomes genuinely dangerous.

When Tier 2 metrics (activity) dominate your dashboard whilst Tier 1 metrics (business risk) are absent or buried, you’re not just failing to prevent issues—you’re actively preventing the organisation from recognising there’s a problem to solve.

Let’s talk money, because that’s the language leadership understands. According to the American Society for Quality, the Cost of Poor Quality is often estimated to range from 5% to 30% of sales revenue. Some organisations see quality-related costs of 15–20% of revenue, with extreme cases reaching 40% of total operations. For a company generating £100 million annually, that’s potentially £20 million lost to rework, customer compensation, emergency remediation, and missed opportunities.

A dashboard reporting “95% test pass rate, 1,200 automated tests executed, zero critical defects” looks reassuring. It justifies your department’s budget. It demonstrates tangible activity. But if that same dashboard is silent on defect escape rates, customer-impacting incident trends, or time-to-detection for revenue-affecting issues—then leadership has activity theatre, not risk intelligence.

The metrics aren’t lying. They’re answering a question nobody asked: “Are testers busy?” rather than “Is the business protected?”

TSB’s £330 million failure was entirely predictable—and they aren’t alone. If you need evidence that investment alone doesn’t equal quality, consider RBS in 2012. They were spending over a billion pounds a year on IT infrastructure. But when a routine software update to their batch processing scheduler went wrong, and the team rolled it back without testing compatibility, 12 million customers were locked out of their accounts for weeks. The regulator’s verdict was damning — huge fines followed. A billion-pound IT budget and the metrics that mattered were missing.

Both organisations had the dashboards that ticked all the activity metrics. What they lacked was insight into actual business risk.

The Question Leadership Should Ask Tomorrow

THE LEADERSHIP QUESTION

“Can your test leadership articulate the three biggest business risks your testing strategy is managing right now?”

If the answer is about test coverage percentages, pass rates, or execution velocity—you have a test factory measuring activity. If the answer is about specific business consequences of potential failures (revenue loss from payment issues, customer churn from poor user experience, regulatory penalties from data handling failures)—you have risk intelligence.

Know the difference. It’s worth millions.

The organisations that get this right don’t abandon metrics—they change what they measure and why. They shift from counting tests executed to understanding risks managed. From celebrating coverage percentages to interrogating what remains unknown. From automation headcount to time-to-insight for critical business scenarios. They understand that testing isn’t manufacturing—it’s investigation. And investigation can’t be measured by counting how many stones you’ve turned over. It must be measured by how well you understand the landscape underneath.

Your testing metrics should be making you uncomfortable. They should be highlighting uncertainty, not hiding it. If your dashboard only ever glows green, it’s not protecting you—it’s just another risk you haven’t recognised yet.

So which is it for your organisation—a testing problem or a measurement problem? For most, I’d wager it’s the latter. At your next Steering Group or Test Leadership meeting, replace one green metric with a discussion about untested business risk.

That single change will tell you more about quality than any dashboard ever has.